Simple Python Code

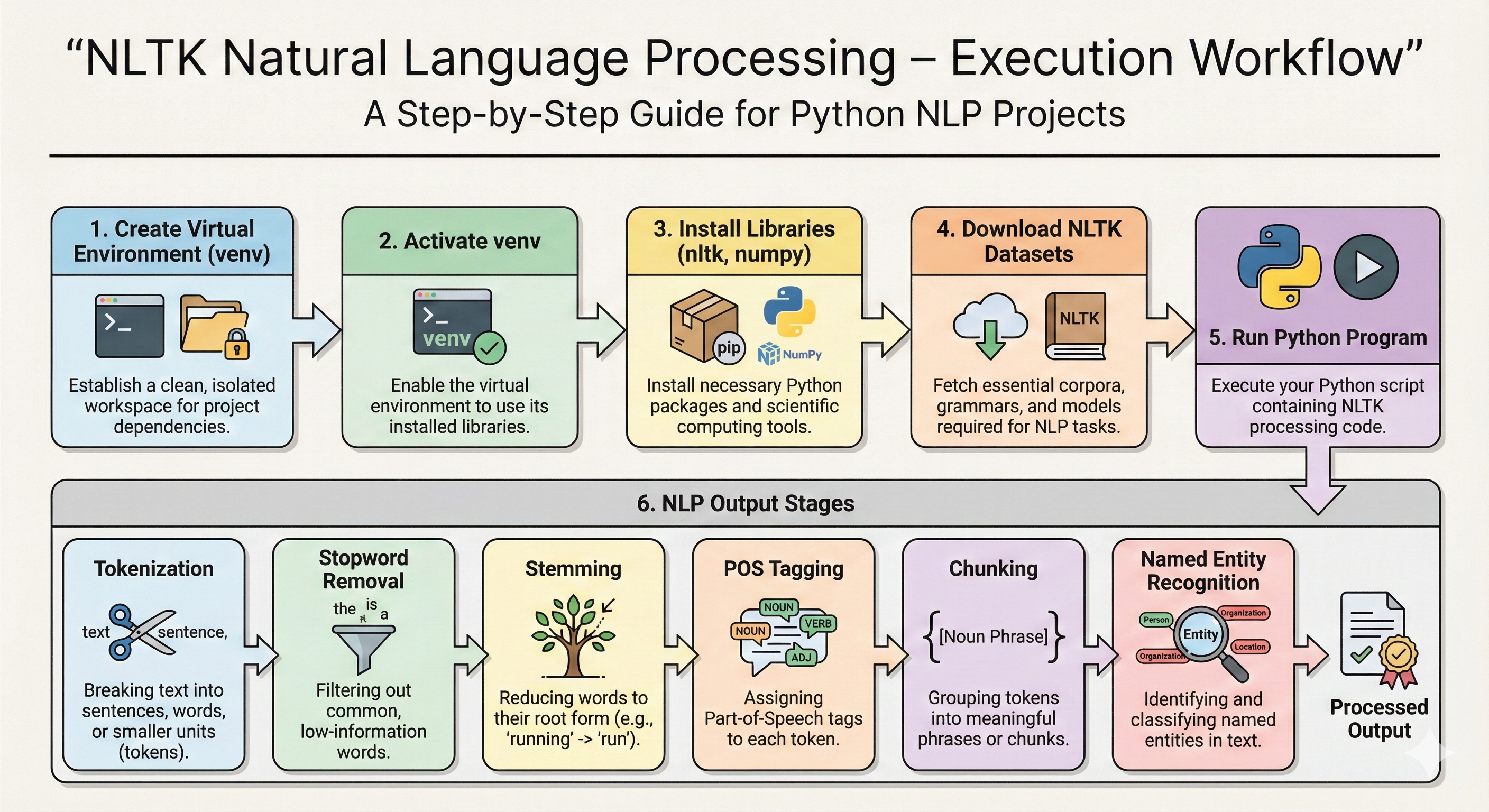

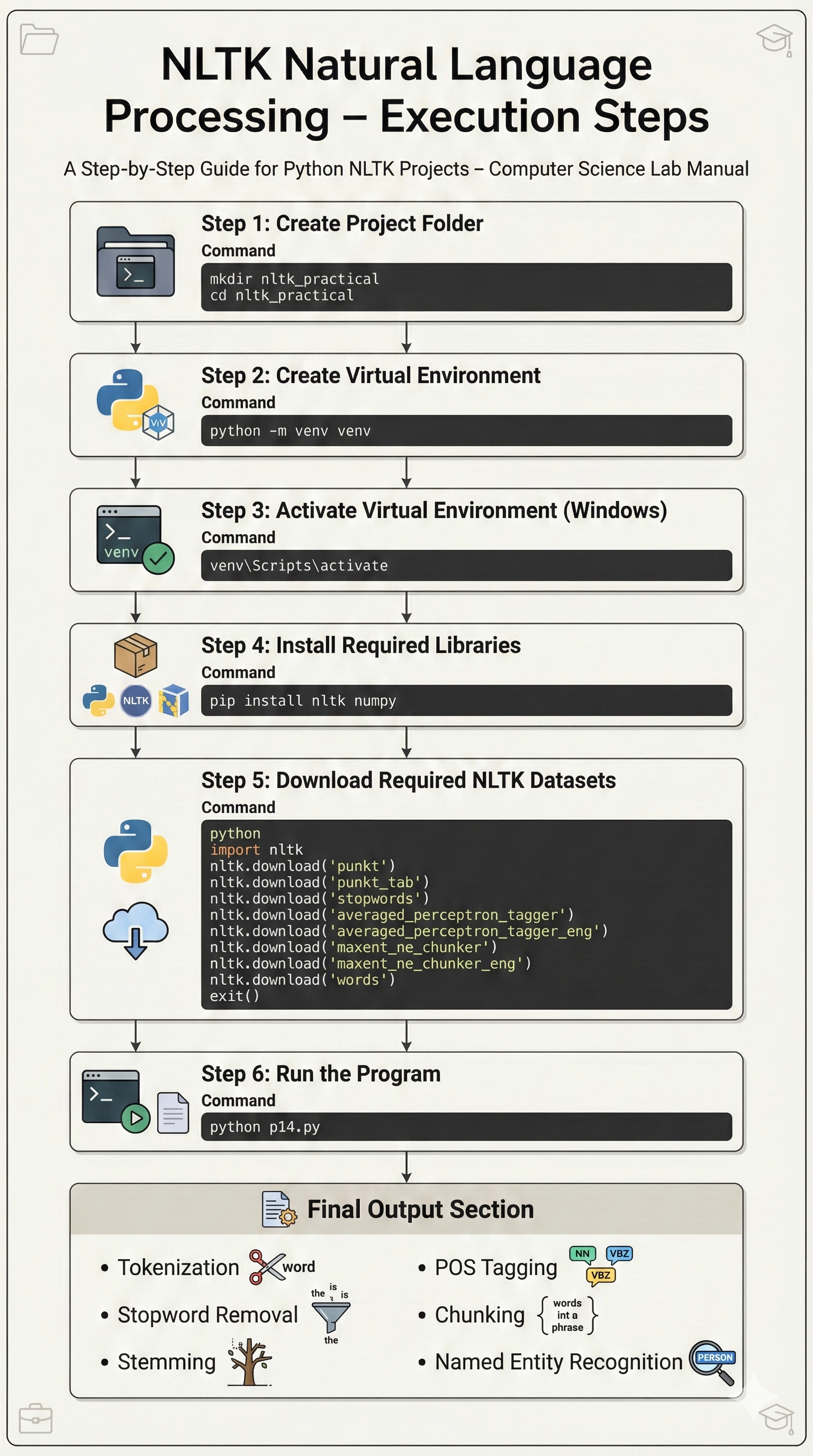

try:

import numpy

import nltk

from nltk.tokenize import word_tokenize

from nltk.corpus import stopwords

from nltk.stem import PorterStemmer

from nltk import pos_tag, ne_chunk

#nltk start

nltk.download('punkt')

nltk.download('punkt_tab')

nltk.download('stopwords')

nltk.download('averaged_perceptron_tagger')

nltk.download('averaged_perceptron_tagger_eng')

nltk.download('maxent_ne_chunker')

nltk.download('maxent_ne_chunker_tab')

nltk.download('words')

#nltk end

except ImportError:

print("NLTK is not installed. Run: pip install nltk numpy")

exit()

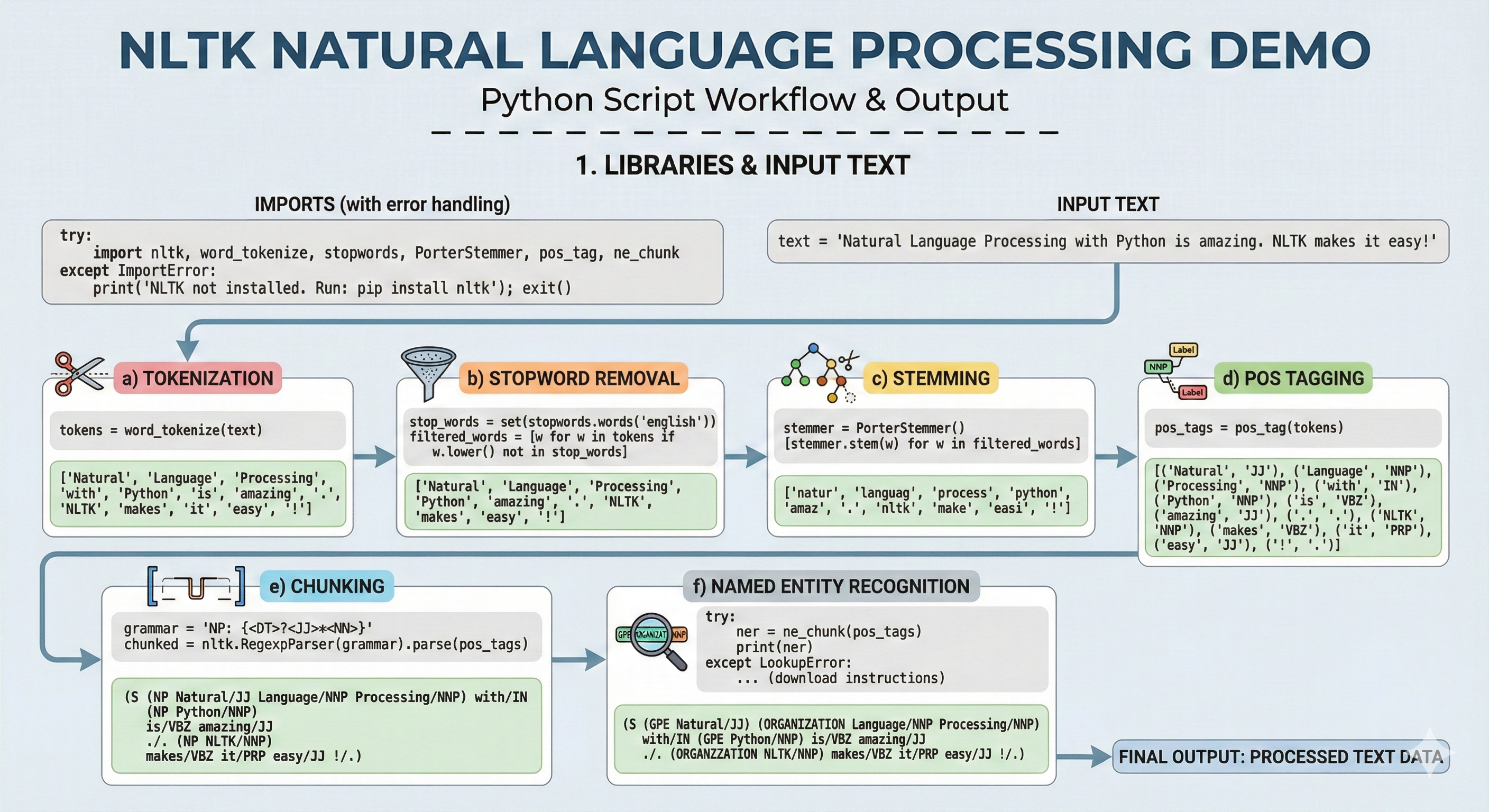

text = "Natural Language Processing with Python is amazing. NLTK makes it easy!"

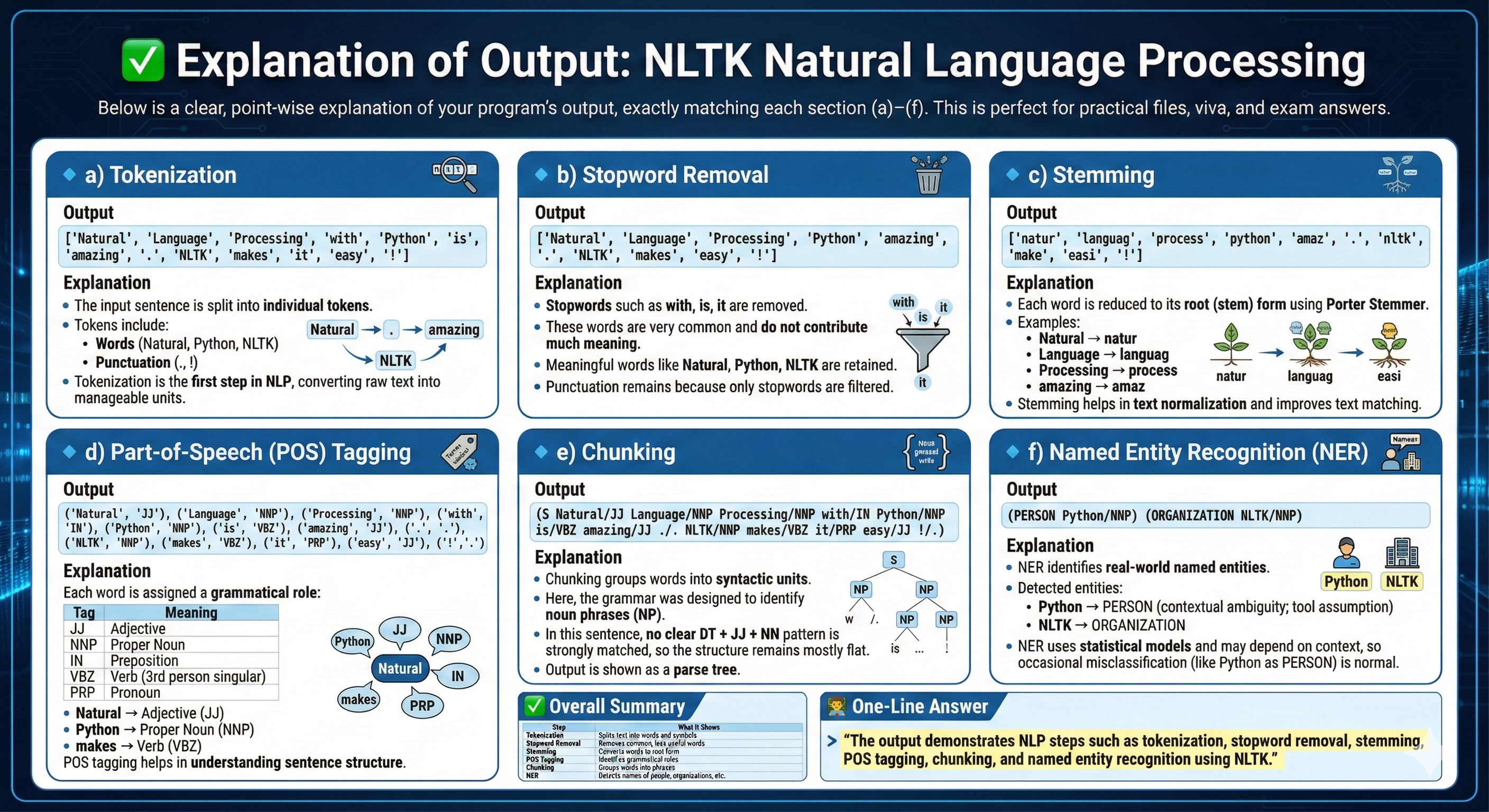

# a) Tokenization

print("a) Tokenization:")

tokens = word_tokenize(text)

print(tokens, "\n")

# b) Stopword Removal

print("b) Stopword Removal:")

stop_words = set(stopwords.words("english"))

filtered_words = [w for w in tokens if w.lower() not in stop_words]

print(filtered_words, "\n")

# c) Stemming

print("c) Stemming:")

stemmer = PorterStemmer()

print([stemmer.stem(w) for w in filtered_words], "\n")

# d) POS Tagging

print("d) POS Tagging:")

pos_tags = pos_tag(tokens)

print(pos_tags, "\n")

# e) Chunking

print("e) Chunking:")

grammar = "NP: {<DT>?<JJ>*<NN>}"

chunked = nltk.RegexpParser(grammar).parse(pos_tags)

print(chunked, "\n")

# f) Named Entity Recognition

print("f) Named Entity Recognition:")

try:

ner = ne_chunk(pos_tags)

print(ner)

except LookupError:

print("NER data error.")

Advanced Python Code

try:

import nltk

from nltk.tokenize import word_tokenize

from nltk.corpus import stopwords

from nltk.stem import PorterStemmer

from nltk import pos_tag, ne_chunk

except ImportError:

print("NLTK is not installed. Run: pip install nltk")

exit()

text = "Natural Language Processing with Python is amazing. NLTK makes it easy!"

# a) Tokenization

print("a) Tokenization:")

tokens = word_tokenize(text)

print(tokens, "\n")

# b) Stopword Removal

print("b) Stopword Removal:")

stop_words = set(stopwords.words("english"))

filtered_words = [w for w in tokens if w.lower() not in stop_words]

print(filtered_words, "\n")

# c) Stemming

print("c) Stemming:")

stemmer = PorterStemmer()

print([stemmer.stem(w) for w in filtered_words], "\n")

# d) POS Tagging

print("d) POS Tagging:")

pos_tags = pos_tag(tokens)

print(pos_tags, "\n")

# e) Chunking

print("e) Chunking:")

grammar = "NP: {<DT>?<JJ>*<NN>}"

chunked = nltk.RegexpParser(grammar).parse(pos_tags)

print(chunked, "\n")

# f) Named Entity Recognition

print("f) Named Entity Recognition:")

try:

ner = ne_chunk(pos_tags)

print(ner)

except LookupError:

print("NER data error.")